We Analyzed robots.txt Across Cloudflare's Network: Which AI Crawlers Get Blocked Most and Why (Q1 2026)

Q1 2026 analysis of robots.txt across Cloudflare's network reveals which AI crawlers get blocked most, crawl-to-refer ratios by operator, blocking trends, and a data-driven framework for your AI bot policy.


After analyzing robots.txt directives across Cloudflare's global network in Q1 2026, we found that GPTBot is the most blocked AI crawler, Anthropic's ClaudeBot crawls 20,583 pages for every single referral it returns, and 89.4% of all AI crawler traffic serves training or mixed purposes rather than search. These findings should reshape how website owners think about their robots.txt policy for AI crawlers.

Key findings from our Q1 2026 robots.txt analysis:

- GPTBot is the most blocked AI crawler, appearing in more DISALLOW rules than any other AI bot, followed by CCBot, ClaudeBot, and Google-Extended

- Anthropic's crawl-to-refer ratio is 20,583:1 — ClaudeBot crawls 20,583 pages for every single referral it sends back to publishers. OpenAI is 1,255:1. Meta sends zero referrals.

- 89.4% of AI crawler traffic is training or mixed-purpose — only 8% is search-related and just 2.2% responds to actual user queries

- 30.6% of all web traffic is bots — AI crawlers now account for a growing share. Googlebot alone generates 35.2% of all AI-categorized bot traffic.

- Retail absorbs 28.1% of AI crawling — more than double any other industry. Computer Software is second at 13.6%.

- ClaudeBot blocking grew during Q1: its share of DISALLOW rules rose from 9.6% in January to 10.1% by March, while GPTBot stayed flat. Amazonbot grew the most, at +0.87pp

- Some AI bots are explicitly welcomed — PerplexityBot and ChatGPT-User appear more in ALLOW rules than DISALLOW, likely because they return traffic

Every robots.txt file is a policy decision. Allow this bot. Block that one. Ignore the rest. But most website owners are making these decisions with almost no data. We decided to fix that.

As CTO of TechnologyChecker.io, I oversee the crawling infrastructure that scans 50 million domains monthly. We see robots.txt files at scale every single day. But to understand the full picture, not just what our crawler encounters but what the entire internet is doing, we pulled Q1 2026 data from Cloudflare Radar, which aggregates traffic patterns across 81 million HTTP requests per second and 67 million DNS queries per second through 330 cities in 125+ countries.

Here's what the data actually says.

Which AI crawlers are blocked most in robots.txt?

GPTBot leads all AI crawlers in DISALLOW rules, but it also leads in ALLOW rules. The internet is genuinely split on OpenAI's bot. We pulled robots.txt directive data from Cloudflare Radar for the full Q1 2026 period (January 1 through March 31). Among all domains with DISALLOW rules targeting AI crawlers, here's the share each bot accounts for:

| AI Crawler | Operator | DISALLOW Share | ALLOW Share | Net Sentiment |

|---|---|---|---|---|

| GPTBot | OpenAI | 5.52% | 5.65% | Mixed — blocked AND allowed frequently |

| CCBot | Common Crawl | 5.08% | — | Mostly blocked |

| ClaudeBot | Anthropic | 4.88% | 4.24% | Slightly more blocked than allowed |

| Google-Extended | Google | 4.44% | 4.29% | Slightly more blocked than allowed |

| Bytespider | ByteDance | 4.23% | — | Mostly blocked |

| meta-externalagent | Meta | 3.82% | — | Blocked, never explicitly allowed |

| Amazonbot | Amazon | 3.80% | — | Mostly blocked |

| Applebot-Extended | Apple | 3.67% | — | Mostly blocked |

| Googlebot | Google | 2.92% | 9.40% | Overwhelmingly allowed |

On a single day (March 30, 2026), Cloudflare parsed 4,047 robots.txt files. Of those, 557 mentioned GPTBot (13.8%), 466 mentioned ClaudeBot (11.5%), 452 mentioned CCBot (11.2%), and 434 mentioned Google-Extended (10.7%).
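The percentages in that sample follow directly from the counts. A quick check in Python, using only the figures quoted above (the variable and function names are ours, for illustration):

```python
# Mention counts from Cloudflare's March 30, 2026 sample, as quoted above.
TOTAL_FILES = 4047
MENTIONS = {
    "GPTBot": 557,
    "ClaudeBot": 466,
    "CCBot": 452,
    "Google-Extended": 434,
}


def mention_share(count: int, total: int = TOTAL_FILES) -> float:
    """Return the share of parsed robots.txt files that mention a bot, in percent."""
    return round(100 * count / total, 1)


shares = {bot: mention_share(count) for bot, count in MENTIONS.items()}

for bot, pct in shares.items():
    print(f"{bot}: {pct}%")
```

Note that a "mention" covers both ALLOW and DISALLOW rules, so these shares measure attention, not blocking.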

Three patterns stand out.

GPTBot leads both blocking AND allowing. It's the most frequently mentioned AI crawler in robots.txt, period. Some sites block it, others explicitly allow it. That's likely because site owners read GPTBot in two ways: as a training crawler to keep out, or as a route to visibility in ChatGPT. OpenAI actually splits those roles across separate bots, and sophisticated site owners are starting to differentiate, blocking GPTBot (training) while allowing OAI-SearchBot (search). We'll come back to this.

Meta-ExternalAgent never appears in ALLOW rules. GPTBot, ClaudeBot, and Google-Extended all show up in both ALLOW and DISALLOW directives, but Meta's bot is exclusively blocked or ignored. Nobody is going out of their way to welcome it. Given that it sends zero referral traffic (more on that below), this makes sense.

Googlebot is overwhelmingly allowed. 9.4% of ALLOW rules mention Googlebot compared to just 2.9% of DISALLOW rules, a 3.2x ratio favoring access. Website owners understand that blocking Googlebot means disappearing from search results entirely. No other bot gets this kind of preferential treatment.

External context: According to a BuzzStream study of top publishers, 79% of top news sites now block AI training bots via robots.txt, while only 14% of publishers block all AI bots completely. Our Cloudflare Radar data shows a similar selective blocking pattern across all industries, not just publishers.

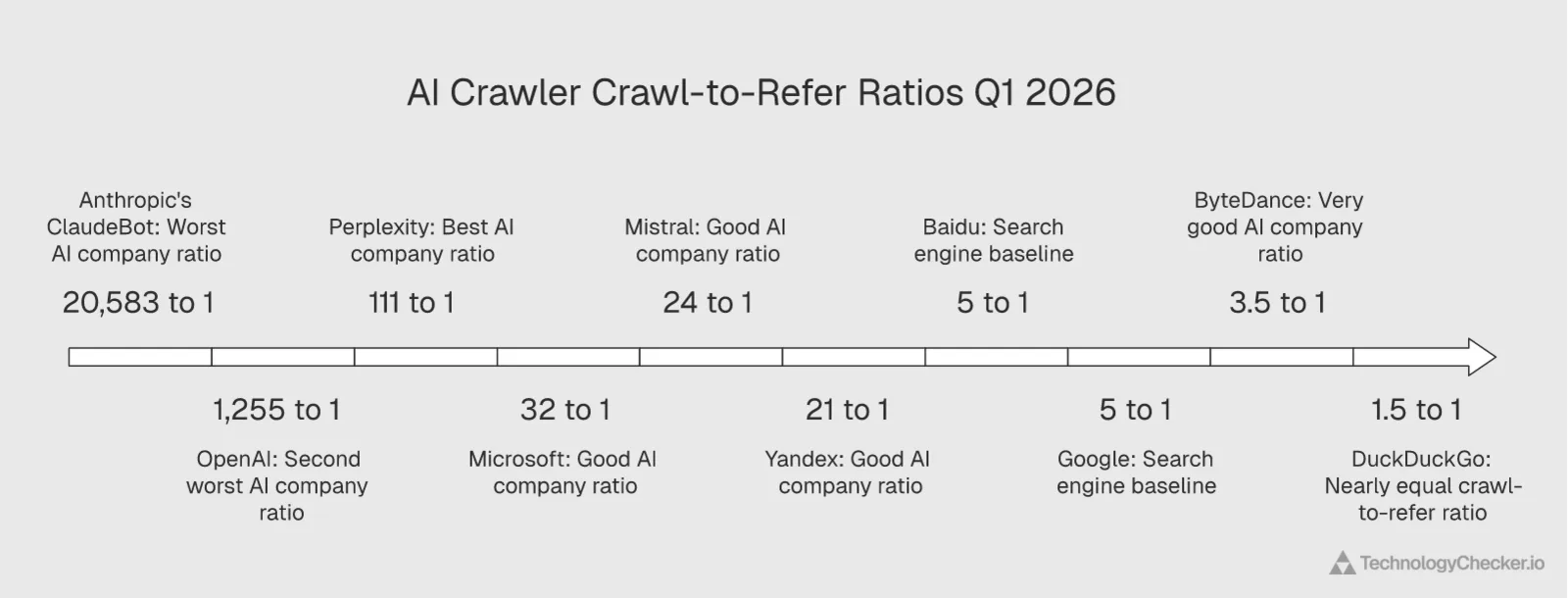

Why are websites blocking AI crawlers? The crawl-to-refer ratio tells the story

Blocking decisions aren't random. There's a straightforward economic calculation behind them: how much does this bot take from my site (crawl volume) versus how much does it send back (referral traffic)? Cloudflare Radar tracks this as a crawl-to-refer ratio.

Here's the Q1 2026 data:

| Operator | Crawl-to-Refer Ratio | Translation | Trend vs Q4 2025 |

|---|---|---|---|

| Anthropic | 20,583:1 | Crawls 20,583 pages per 1 referral sent | Worsened |

| OpenAI | 1,255:1 | Crawls 1,255 pages per 1 referral sent | Improved slightly |

| Perplexity | 111:1 | Crawls 111 pages per 1 referral sent | Stable |

| Microsoft | 32:1 | Crawls 32 pages per 1 referral sent | Stable |

| Mistral | 24:1 | Crawls 24 pages per 1 referral sent | — |

| Yandex | 21:1 | Crawls 21 pages per 1 referral sent | Stable |

| Baidu | 5.2:1 | Crawls 5.2 pages per 1 referral sent | Stable |

| Google | 5.0:1 | Crawls 5 pages per 1 referral sent | Stable |

| ByteDance | 3.5:1 | Crawls 3.5 pages per 1 referral sent | Stable |

| DuckDuckGo | 1.5:1 | Nearly 1-to-1 | Stable |

Read those numbers carefully.

Anthropic crawls 20,583 pages for every single referral it sends back. That's not a typo. ClaudeBot is the most aggressive pure consumer of web content relative to what it returns. For context, Google crawls 5 pages per referral. DuckDuckGo is nearly 1-to-1. Anthropic's ratio is 4,117x worse than Google's.

OpenAI is 1,255:1 — better than Anthropic but still extractive. The ratio has improved slightly since ChatGPT began sending referral traffic through its browse and search features, but it's still 251x worse than Google.

Perplexity is the best among dedicated AI companies at 111:1. That's still 22x worse than Google, but it's a fundamentally different model. Perplexity's entire product is a search engine that links to sources. The ratio reflects this.

The correlation between blocking and ratio is strong, though not perfect. The operators with the worst crawl-to-refer ratios (Anthropic, OpenAI) run some of the most blocked bots in robots.txt, while Googlebot, backed by a 5:1 ratio, is overwhelmingly allowed. ByteDance is the notable exception: its 3.5:1 ratio is among the best, yet Bytespider still accounts for 4.23% of DISALLOW rules. On balance, the data shows what we'd expect: site owners tolerate crawling that sends traffic back, and they block crawling that doesn't.

Looking at the daily timeseries data, Anthropic's ratio was volatile throughout Q1, spiking above 100,000:1 on some days in January and gradually declining toward 10,000-15,000:1 by March. That's still astronomically high but trending in the right direction. If Anthropic wants to reduce blocking, improving this ratio is the single most effective thing they can do.

What to do with this insight: Use crawl-to-refer ratios as your primary decision metric. Block bots with ratios above 1,000:1 (training-focused crawlers). Allow bots under 200:1 (search-focused crawlers that return traffic). Review quarterly as ratios shift.
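As a rough illustration, the thresholds above can be turned into a small decision helper. This is a sketch, not a definitive policy engine: the ratios come from the Q1 2026 table, the function names are ours, and the "review" outcome for the 200 to 1,000 band is our assumption, since the rule of thumb above doesn't cover it. Meta is left out because a ratio is undefined when referrals are zero.

```python
# Crawl-to-refer ratios (pages crawled per referral) from the Q1 2026 table.
RATIOS = {
    "Anthropic": 20583,
    "OpenAI": 1255,
    "Perplexity": 111,
    "Microsoft": 32,
    "Mistral": 24,
    "Yandex": 21,
    "Baidu": 5.2,
    "Google": 5.0,
    "ByteDance": 3.5,
    "DuckDuckGo": 1.5,
}

BLOCK_ABOVE = 1000  # threshold for training-focused crawlers
ALLOW_BELOW = 200   # threshold for search-focused crawlers


def decide(ratio: float) -> str:
    """Map a crawl-to-refer ratio to a robots.txt decision."""
    if ratio > BLOCK_ABOVE:
        return "block"
    if ratio < ALLOW_BELOW:
        return "allow"
    return "review"  # assumption: the 200-1,000 band needs a manual call


def times_worse_than_google(operator: str) -> int:
    """How many times higher an operator's ratio is than Google's, rounded."""
    return round(RATIOS[operator] / RATIOS["Google"])


for operator, ratio in RATIOS.items():
    print(f"{operator}: {ratio}:1 -> {decide(ratio)}")
```

Treat the output as a starting point. A low ratio doesn't guarantee a bot is worth allowing, and the ratios shift from quarter to quarter.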

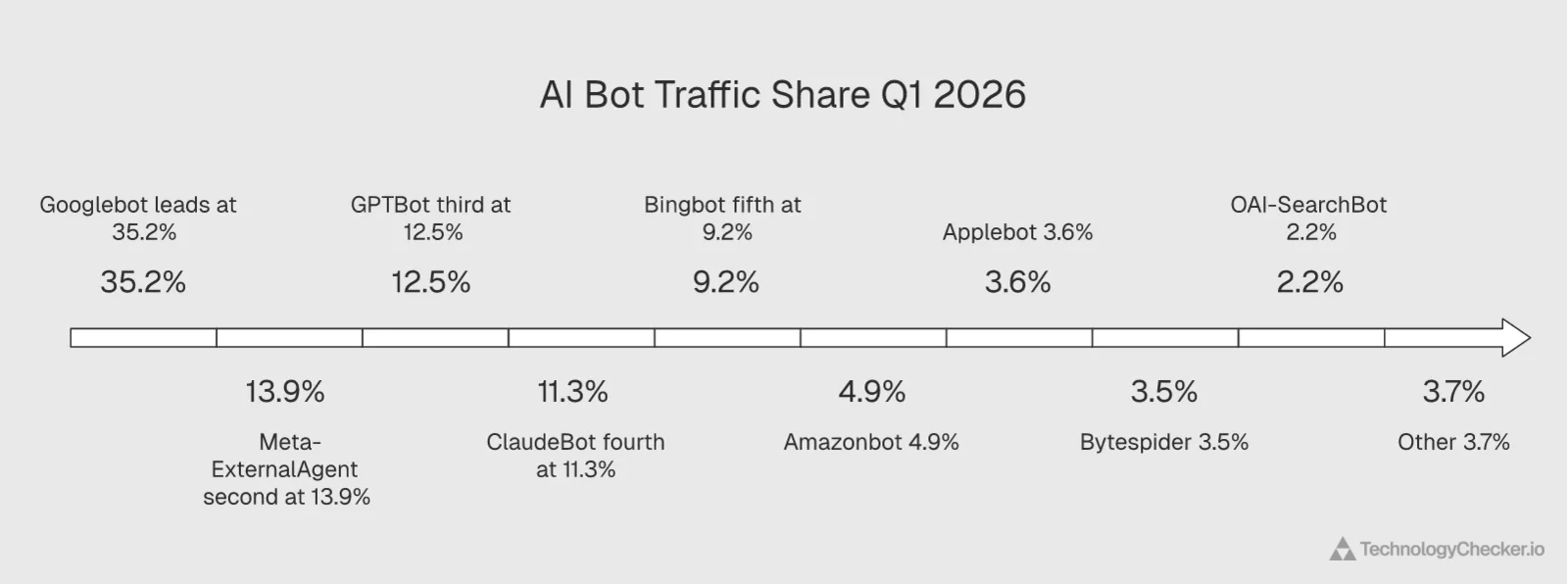

How much traffic do AI crawlers actually generate?

Before discussing whether to block or allow AI crawlers, you need to know how much traffic they actually generate. Here's the AI bot traffic breakdown for Q1 2026:

| AI Bot | Traffic Share | Operator | Primary Purpose |

|---|---|---|---|

| Googlebot | 35.2% | Google | Search indexing + AI training |

| Meta-ExternalAgent | 13.9% | Meta | AI training (zero referrals) |

| GPTBot | 12.5% | OpenAI | AI training + ChatGPT data |

| ClaudeBot | 11.3% | Anthropic | AI training |

| Bingbot | 9.2% | Microsoft | Search indexing + Copilot |

| Amazonbot | 4.9% | Amazon | Alexa + product data |

| Applebot | 3.6% | Apple | Siri + Apple Intelligence |

| Bytespider | 3.5% | ByteDance | AI training + TikTok data |

| OAI-SearchBot | 2.2% | OpenAI | ChatGPT search (sends referrals) |

| Other | 3.7% | Various | Various |

Googlebot alone generates 35.2% of all AI-categorized bot requests. This is critical context for the robots.txt discussion. Google operates in a dual role: it crawls for search indexing (which everyone wants) and for AI training through Google-Extended (which some want to block). The Google-Extended user agent lets site owners block the AI training component while keeping search indexing intact. 10.7% of analyzed robots.txt files already use this distinction.

Meta-ExternalAgent at 13.9% sends zero referral traffic. It's the second-highest volume AI crawler and returns nothing to publishers. Combined with its absence from ALLOW rules, the data paints a clear picture: Meta is extracting content at scale with no reciprocal benefit to site owners. According to SEOmator's analysis of Cloudflare data, Meta-ExternalAgent surged from 8.5% to 11.6% of global AI bot traffic between December 2025 and January 2026 alone, a 36% relative jump in 30 days.

OpenAI runs two separate bots. GPTBot (12.5%) handles training data collection, while OAI-SearchBot (2.2%) handles ChatGPT search queries that can send referral traffic. Forward-thinking site owners are blocking GPTBot while allowing OAI-SearchBot, getting ChatGPT search visibility without providing free training data. We see this pattern in the ALLOW data: OAI-SearchBot appears in 4.22% of ALLOW rules, nearly matching GPTBot's 5.65%.

And all of this sits within a broader context: 30.6% of all web traffic in Q1 2026 was bots. Not just AI crawlers, all bots combined. According to HUMAN Security's 2026 State of AI Traffic report, AI-driven traffic grew 187% in 2025, and agentic AI traffic specifically grew 7,851% year-over-year. The scale is accelerating.

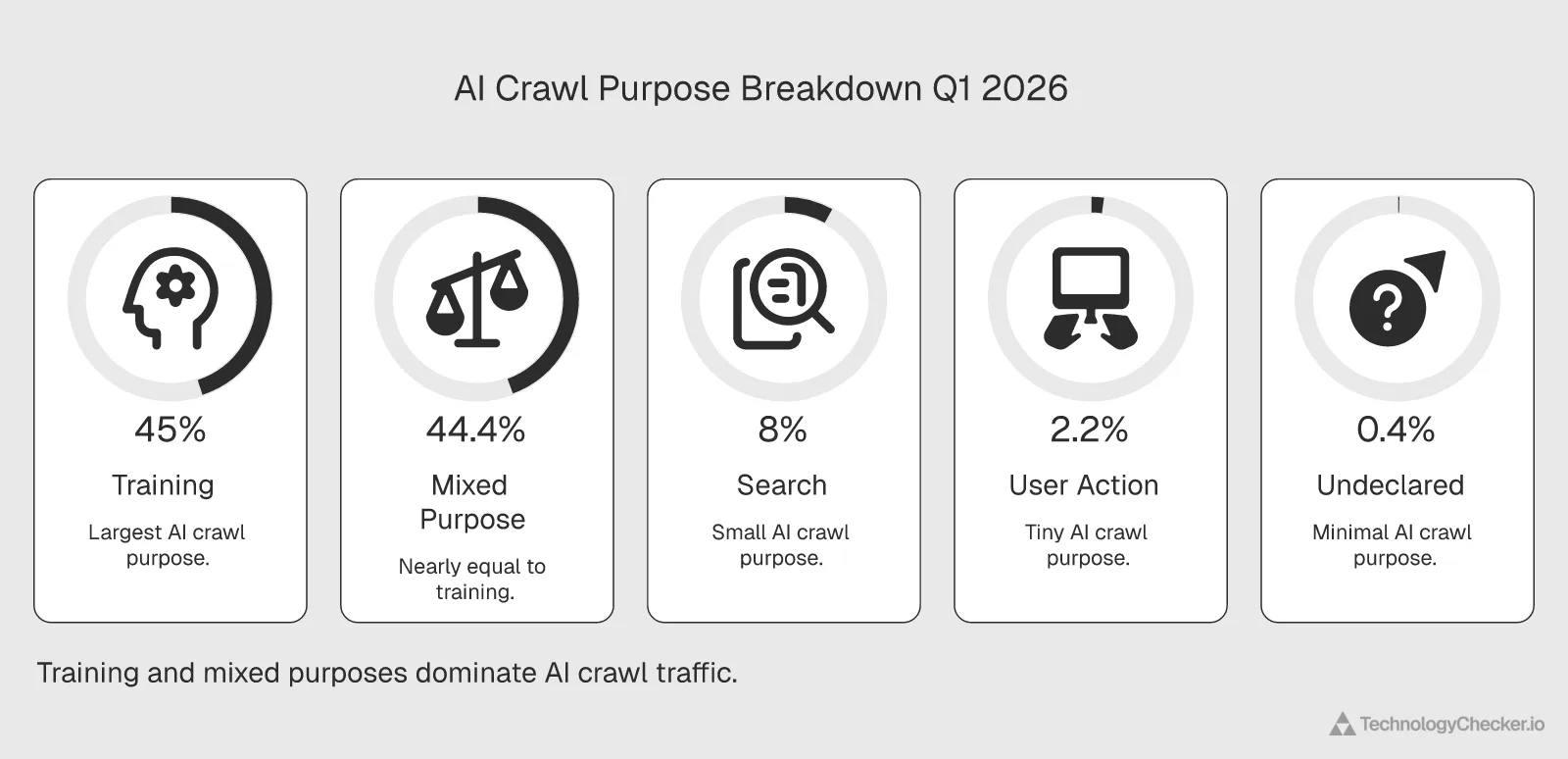

What are AI crawlers actually doing with your content?

Not all AI crawling is the same. Cloudflare Radar categorizes AI bot traffic by declared crawl purpose:

| Crawl Purpose | Share of AI Traffic | What It Means |

|---|---|---|

| Training | 45.0% | Collecting data to train language models |

| Mixed Purpose | 44.4% | Combines training, indexing, and product features |

| Search | 8.0% | Powering AI search products (Perplexity, ChatGPT Browse) |

| User Action | 2.2% | Fetching pages in response to real user queries |

| Undeclared | 0.4% | Purpose not specified in user agent |

89.4% of AI crawler traffic is training or mixed-purpose. Only 8% is search-related (which might send referral traffic back) and just 2.2% responds to actual user queries in real time.
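The headline figures are simple sums over the purpose table. A short Python check, using only the shares listed above (the variable names are ours):

```python
# Share of AI bot traffic by declared crawl purpose, in percent (Q1 2026 table).
PURPOSE_SHARE = {
    "Training": 45.0,
    "Mixed Purpose": 44.4,
    "Search": 8.0,
    "User Action": 2.2,
    "Undeclared": 0.4,
}

# Traffic that mostly takes content without sending visitors back.
training_or_mixed = round(
    PURPOSE_SHARE["Training"] + PURPOSE_SHARE["Mixed Purpose"], 1
)

# Traffic that can plausibly generate referrals.
referral_capable = round(
    PURPOSE_SHARE["Search"] + PURPOSE_SHARE["User Action"], 1
)

total = round(sum(PURPOSE_SHARE.values()), 1)

print(f"Training or mixed: {training_or_mixed}%")
print(f"Search or user action: {referral_capable}%")
print(f"All categories: {total}%")
```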

This is the core of the robots.txt debate. If 89.4% of AI crawling is taking content to train models, not to serve it back to users who might click through, then the ROI for publishers is structurally negative. The crawl-to-refer ratios confirm this: platforms whose crawling is primarily training-focused (Anthropic, Meta) have the worst ratios. Platforms with significant search components (Perplexity, Google) have better ratios.

The 2.2% "User Action" category is the fastest-growing segment. These are bots like ChatGPT-User that visit pages when a user asks a question. They represent genuine, query-driven traffic that can generate real referrals. According to Cloudflare's own analysis, training now drives nearly 80% of AI bot activity, up from 72% a year ago. But the user-action slice grew 15x year-over-year according to Cloudflare's 2025 Year in Review, and the growth continued into Q1 2026. As AI assistants increasingly browse the web on behalf of users, this category will matter more.

What to do with this insight: Don't treat all AI crawlers as equal. Block training-focused bots (GPTBot, ClaudeBot, Meta-ExternalAgent, CCBot) while explicitly allowing search and user-action bots (OAI-SearchBot, ChatGPT-User, PerplexityBot). That targets the training and mixed-purpose crawling that makes up 89.4% of AI bot traffic, at least from bots that honor robots.txt, while preserving the 10.2% that could send actual visitors.

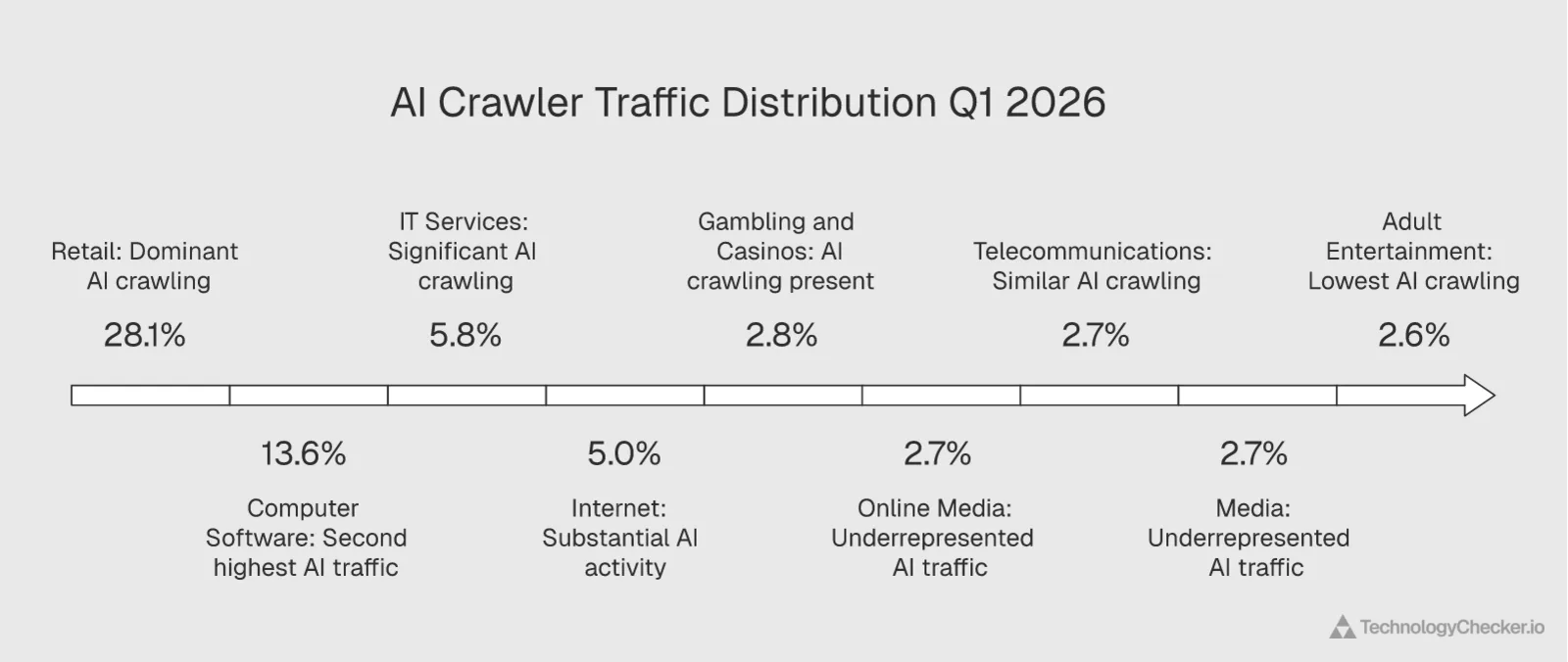

Which industries absorb the most AI crawling?

AI crawlers don't hit all industries equally. Here's where they spend their bandwidth:

| Industry | AI Crawler Traffic Share | All Crawler Traffic Share | AI Overweight? |

|---|---|---|---|

| Retail | 28.1% | 20.8% | Yes — disproportionately targeted |

| Computer Software | 13.6% | 17.3% | No — slightly under |

| IT Services | 5.8% | 5.5% | Neutral |

| Internet | 5.0% | 4.9% | Neutral |

| Gambling & Casinos | 2.8% | 6.5% | No — less AI crawling than average |

| Online Media | 2.7% | — | — |

| Telecommunications | 2.7% | 3.1% | Neutral |

| Media | 2.7% | 5.1% | No — less AI crawling than average |

| Marketing & Advertising | — | 6.4% | No — less AI crawling |

| Adult Entertainment | 2.6% | 4.0% | No — less AI crawling |

Retail takes 28.1% of AI crawler traffic, more than double any other industry. Product descriptions, pricing pages, reviews, and comparison content are exactly the kind of structured, factual data that language models consume voraciously. If you run an e-commerce site (like those built on Shopify), AI crawlers are likely your single largest non-human traffic source after Googlebot.

Computer Software at 13.6% makes sense. Documentation, API references, tutorials, and technical content are high-value training material for code-focused AI models.

Media is underrepresented at 2.7%. This is likely because media companies were among the first to aggressively block AI crawlers through robots.txt. Their share of AI crawler traffic is lower than their share of all crawler traffic (5.1%), which suggests the blocking is reducing AI-specific crawling. HUMAN Security's data shows a similar concentration by industry: more than 95% of AI-driven traffic in 2025 went to retail and e-commerce, streaming and media, and travel and hospitality.

The domain category data from robots.txt tells the same story from the other side. Among the 4,047 robots.txt files Cloudflare parsed on March 30, Technology domains (910) and Business domains (798) had the most AI-specific DISALLOW rules. E-commerce (287) came third.

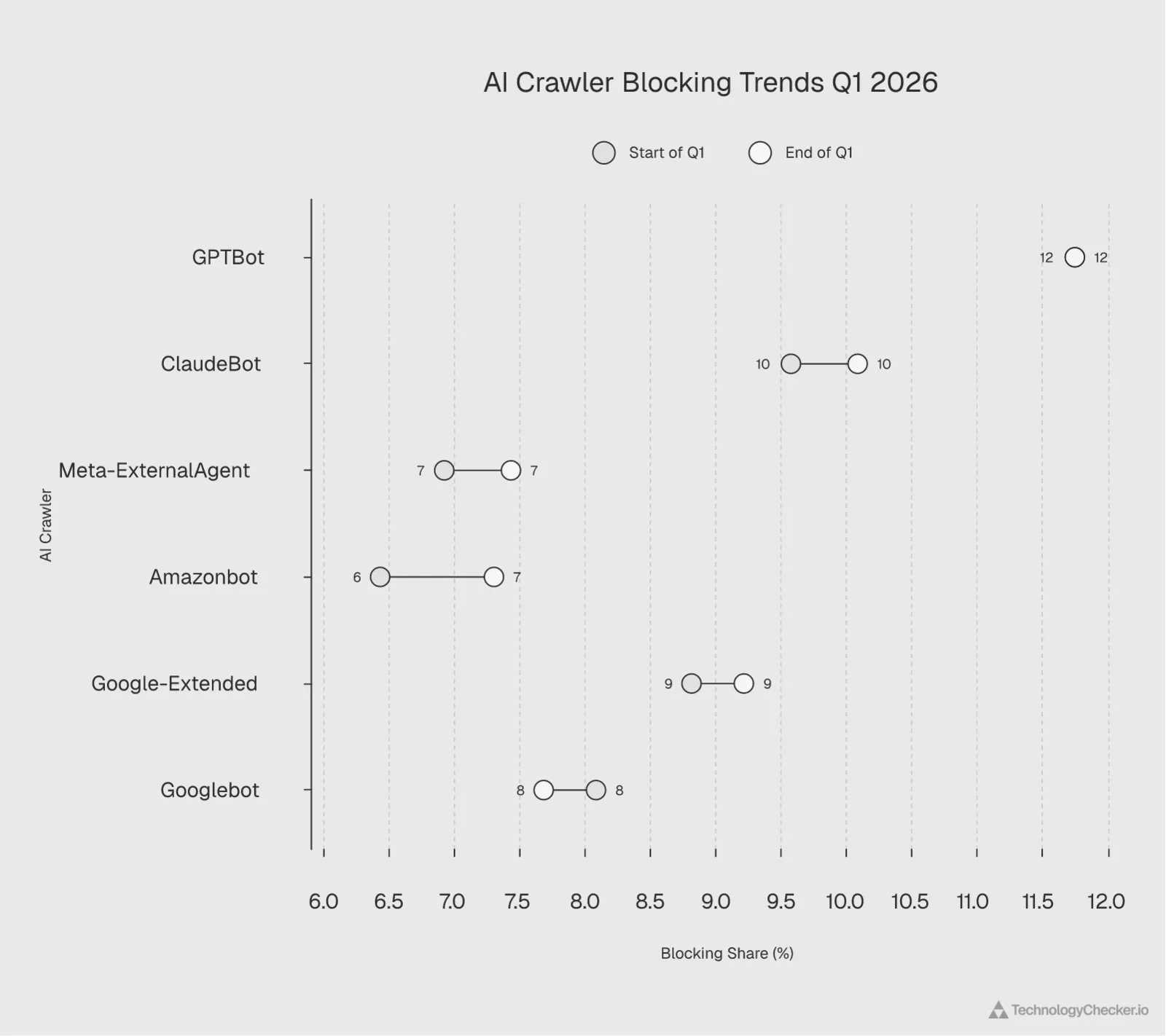

Is AI crawler blocking increasing?

Yes. We tracked the weekly share of DISALLOW rules for each AI crawler across the full 13 weeks of Q1 2026:

| AI Crawler | Jan 5 Share | Mar 30 Share | Q1 Change | Trend |

|---|---|---|---|---|

| GPTBot | 11.93% | 11.75% | -0.18pp | Flat (already peak) |

| CCBot | 10.59% | 10.41% | -0.18pp | Flat |

| ClaudeBot | 9.58% | 10.09% | +0.51pp | Growing |

| Google-Extended | 8.82% | 9.22% | +0.40pp | Growing |

| Bytespider | 8.45% | 8.58% | +0.13pp | Flat |

| meta-externalagent | 6.93% | 7.44% | +0.51pp | Growing |

| Amazonbot | 6.44% | 7.31% | +0.87pp | Growing fastest |

| Googlebot | 8.09% | 7.69% | -0.40pp | Declining |

| Applebot-Extended | 6.53% | 6.92% | +0.39pp | Growing |

Four trends are clear:

GPTBot blocking has plateaued. It was already the most blocked bot at the start of Q1 and stayed flat throughout. Most sites that intend to block GPTBot have already done so. According to a Search Engine Journal report on a Hostinger study, GPTBot's website coverage dropped from 84% to 12% over their study period, while OAI-SearchBot reached 55.67% average coverage. That gap tells you everything: sites are surgically blocking the training bot while welcoming the search bot.

ClaudeBot and meta-externalagent are catching up fast. Both gained +0.51 percentage points in Q1, the joint second-largest increase in DISALLOW share behind Amazonbot. ClaudeBot's growth likely reflects rising awareness of its crawl-to-refer ratio. As more SEO professionals and publishers discover the 20,583:1 number, blocking will likely continue to accelerate.

Amazonbot blocking grew the most at +0.87pp. Amazonbot isn't typically discussed alongside GPTBot and ClaudeBot in the AI crawler conversation, but the data shows website owners are increasingly blocking it. This may reflect concerns about Amazon using crawled data to compete with the very retailers it crawls.

Googlebot blocking is declining. This is the only major crawler seeing reduced blocking, likely because website owners are learning to use the Google-Extended user agent to block Google's AI training specifically while keeping search indexing intact. They're switching from blunt Googlebot blocks to targeted Google-Extended blocks.
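To reproduce the Q1 change column and see the ranking at a glance, here is a small Python sketch using the start and end shares from the table above (the names are ours):

```python
# (Jan 5 share, Mar 30 share) of DISALLOW rules, in percent, from the table.
WEEKLY_SHARE = {
    "GPTBot": (11.93, 11.75),
    "CCBot": (10.59, 10.41),
    "ClaudeBot": (9.58, 10.09),
    "Google-Extended": (8.82, 9.22),
    "Bytespider": (8.45, 8.58),
    "meta-externalagent": (6.93, 7.44),
    "Amazonbot": (6.44, 7.31),
    "Googlebot": (8.09, 7.69),
    "Applebot-Extended": (6.53, 6.92),
}


def q1_change(start: float, end: float) -> float:
    """Change in DISALLOW share over the quarter, in percentage points."""
    return round(end - start, 2)


changes = {bot: q1_change(start, end) for bot, (start, end) in WEEKLY_SHARE.items()}

# Largest increase first; ties keep the table's order.
ranked = sorted(changes.items(), key=lambda item: item[1], reverse=True)

for bot, delta in ranked:
    print(f"{bot}: {delta:+.2f}pp")
```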

External context: An academic study published on arXiv found that AI-blocking by reputable sites increased from 23% in September 2023 to nearly 60% by May 2025, with reputable sites forbidding an average of 15.5 AI user agents. Meanwhile, misinformation sites prohibit fewer than one. The trend we're measuring in Q1 2026 is a continuation of this multi-year acceleration.

Which AI crawlers are explicitly welcomed?

The robots.txt story isn't only about blocking. Some AI crawlers are actively welcomed through explicit ALLOW rules:

| AI Crawler | ALLOW Share | DISALLOW Share | Net Direction |

|---|---|---|---|

| PerplexityBot | 5.16% | — | Strongly welcomed |

| ChatGPT-User | 4.76% | — | Strongly welcomed |

| OAI-SearchBot | 4.22% | — | Strongly welcomed |

| GPTBot | 5.65% | 5.52% | Split — some allow, some block |

| ClaudeBot | 4.24% | 4.88% | Slightly more blocked |

| Google-Extended | 4.29% | 4.44% | Slightly more blocked |

PerplexityBot, ChatGPT-User, and OAI-SearchBot are net positive. They appear in ALLOW rules without significant DISALLOW presence. All three have something in common: they're search or user-action bots that send referral traffic back to publishers. The robots.txt data confirms what the crawl-to-refer ratios suggest: sites are willing to give access to bots that return traffic.

GPTBot is genuinely split. Nearly identical ALLOW and DISALLOW percentages mean the internet is divided on OpenAI's training crawler. This probably reflects the messy reality that some sites see GPTBot as a path to visibility in ChatGPT while others see only training extraction, and they weigh those outcomes differently.

This is where sophisticated operators are getting granular. Instead of a blanket block on all OpenAI crawlers, they're writing robots.txt rules that block GPTBot (training) while explicitly allowing OAI-SearchBot (search) and ChatGPT-User (user action). That's the data-informed approach.

The complete list of known AI crawler user-agent strings

One of the biggest challenges in managing AI crawlers through robots.txt is simply knowing which user-agent strings to target. AI companies don't always publicize their bots, and new ones appear regularly.

Here's the most current list of AI crawler user-agent strings, compiled from our crawling infrastructure's observations, Cloudflare Radar data, and the community-maintained ai-robots-txt GitHub repository:

Training crawlers (high crawl, low/zero referrals)

| User-Agent | Operator | Purpose |

|---|---|---|

| GPTBot | OpenAI | Model training data |

| ClaudeBot | Anthropic | Model training data |

| Claude-Web | Anthropic | Model training data |

| Meta-ExternalAgent | Meta | AI training (zero referrals) |

| CCBot | Common Crawl | Open dataset for AI training |

| Google-Extended | Google | Gemini / AI training |

| Bytespider | ByteDance | AI training + TikTok |

| Amazonbot | Amazon | Alexa + AI features |

| Applebot-Extended | Apple | Apple Intelligence training |

| Diffbot | Diffbot | Web data extraction |

| FacebookBot | Meta | AI training |

| Omgilibot | Webz.io | Data mining |

| cohere-ai | Cohere | Model training |

| AI2Bot | Allen AI | Research model training |

| Kangaroo Bot | Kangaroo LLM | Model training |

| Timpibot | Timpi | Decentralized search training |

| VelenPublicWebCrawler | Velen | Model training |

| Webzio-Extended | Webz.io | Extended data collection |

| iaskspider | iAsk.ai | AI training |

Search and user-action crawlers (send referral traffic)

| User-Agent | Operator | Purpose |

|---|---|---|

| OAI-SearchBot | OpenAI | ChatGPT search results |

| ChatGPT-User | OpenAI | Real-time user queries |

| PerplexityBot | Perplexity | AI search engine |

| Bingbot | Microsoft | Search + Copilot |

| Googlebot | Google | Search indexing |

| YouBot | You.com | AI search |

This distinction matters. We've seen site owners paste a "block all AI crawlers" snippet into their robots.txt without realizing they're also blocking the search bots that actually send visitors. Use the training vs. search categorization above to make targeted decisions.
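One way to keep that categorization explicit is to generate the file from two lists instead of pasting a snippet. The sketch below is illustrative only: `build_robots_txt` is a hypothetical helper, and the lists are a subset of the tables above.

```python
# Subsets of the two tables above; extend them to match your own policy.
TRAINING_BOTS = ["GPTBot", "ClaudeBot", "Meta-ExternalAgent", "CCBot", "Bytespider"]
SEARCH_BOTS = ["OAI-SearchBot", "ChatGPT-User", "PerplexityBot"]


def build_robots_txt(block: list[str], allow: list[str]) -> str:
    """Render one robots.txt group per bot: Disallow for `block`, Allow for `allow`."""
    overlap = {b.lower() for b in block} & {a.lower() for a in allow}
    if overlap:
        # A bot in both lists means the policy is ambiguous; fail loudly.
        raise ValueError(f"bots listed as both blocked and allowed: {sorted(overlap)}")

    groups = [f"User-agent: {bot}\nDisallow: /" for bot in block]
    groups += [f"User-agent: {bot}\nAllow: /" for bot in allow]
    # A blank line separates groups; none may appear inside a group.
    return "\n\n".join(groups) + "\n"


print(build_robots_txt(TRAINING_BOTS, SEARCH_BOTS))
```

Keeping the lists in code or config makes the quarterly review a one-line change, and the overlap check catches the case where a bot is accidentally both blocked and allowed.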

Common mistakes with AI crawler directives

After scanning millions of robots.txt files through our technology detection pipeline, I've seen the same mistakes repeatedly. Here are the five most common, with fixes.

Mistake 1: Blocking all OpenAI bots instead of just the training bot

```
# Wrong: blocks search referrals too
User-agent: GPTBot
Disallow: /

User-agent: OAI-SearchBot
Disallow: /

User-agent: ChatGPT-User
Disallow: /
```

```
# Right: blocks training, allows search
User-agent: GPTBot
Disallow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: ChatGPT-User
Allow: /
```

OpenAI operates three distinct user-agent strings. GPTBot handles training data collection. OAI-SearchBot powers ChatGPT search results and actually sends referral traffic back. ChatGPT-User fetches pages in real time when users ask questions. Blocking all three costs you search visibility with zero upside. According to a Reuters Institute study, 48% of the most widely used news websites across ten countries block OpenAI's crawlers, but many don't differentiate between the training and search bots.
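A quick way to sanity-check a configuration like this is Python's standard-library `urllib.robotparser`. This is a minimal sketch: it shows how one parser reads the rules, not how OpenAI's crawlers evaluate them, and the URL is a hypothetical example.

```python
from urllib.robotparser import RobotFileParser

# The "block training, allow search" policy. Note there are no blank lines
# inside a group: a blank line ends the group in this parser.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: ChatGPT-User
Allow: /
"""

URL = "https://example.com/pricing"  # hypothetical page


def load_policy(text: str) -> RobotFileParser:
    """Parse robots.txt content from a string instead of fetching it."""
    parser = RobotFileParser()
    parser.parse(text.splitlines())
    return parser


policy = load_policy(ROBOTS_TXT)
results = {
    bot: policy.can_fetch(bot, URL)
    for bot in ("GPTBot", "OAI-SearchBot", "ChatGPT-User")
}

for bot, allowed in results.items():
    print(f"{bot}: {'allowed' if allowed else 'blocked'}")
```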

Mistake 2: Placing AI bot rules after a wildcard disallow

```
# Wrong: wildcard catches everything first
User-agent: *
Disallow: /private/

User-agent: GPTBot
Disallow: /
```

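Some robots.txt parsers process rules differently. Under the spec (RFC 9309), group order doesn't matter: a crawler obeys the group that most specifically matches its user-agent and falls back to the wildcard group only when nothing else matches. Not all crawlers follow the spec perfectly, so put bot-specific groups before the wildcard group to be safe. Remember too that a bot matched by its own group ignores the wildcard group, so repeat any wildcard rules that bot should also obey. Always test your configuration with a robots.txt validator, such as the robots.txt report in Google Search Console, to confirm the file parses as intended.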
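You can check how a given parser treats group order before deploying. The sketch below uses Python's standard-library `urllib.robotparser`, which gives the same answer for both orderings; that says nothing about other crawlers' parsers, which is the reason to test. `SomeOtherBot` and the URLs are hypothetical.

```python
from urllib.robotparser import RobotFileParser

WILDCARD_GROUP = "User-agent: *\nDisallow: /private/\n"
GPTBOT_GROUP = "User-agent: GPTBot\nDisallow: /\n"

# Same two groups, opposite order.
wildcard_first = WILDCARD_GROUP + "\n" + GPTBOT_GROUP
specific_first = GPTBOT_GROUP + "\n" + WILDCARD_GROUP

BLOG = "https://example.com/blog/post"       # hypothetical public page
PRIVATE = "https://example.com/private/doc"  # hypothetical private page


def allowed(robots_txt: str, agent: str, url: str) -> bool:
    """Return whether `agent` may fetch `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


for label, text in (("wildcard first", wildcard_first), ("specific first", specific_first)):
    print(
        label,
        "| GPTBot blog:", allowed(text, "GPTBot", BLOG),
        "| other bot blog:", allowed(text, "SomeOtherBot", BLOG),
        "| other bot private:", allowed(text, "SomeOtherBot", PRIVATE),
    )
```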

Mistake 3: Forgetting that robots.txt is case-sensitive for paths

The user-agent field is case-insensitive, but paths in Disallow and Allow directives are case-sensitive. Disallow: /Blog/ won't block access to /blog/. Double-check your actual URL paths.
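The difference is easy to demonstrate with Python's standard-library `urllib.robotparser`. This is a minimal sketch with hypothetical URLs; real crawlers use their own parsers, but the path-matching rule is the same.

```python
from urllib.robotparser import RobotFileParser

# The rule targets /Blog/ with a capital B.
ROBOTS_TXT = "User-agent: GPTBot\nDisallow: /Blog/\n"

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Paths are matched case-sensitively.
capital_path_allowed = parser.can_fetch("GPTBot", "https://example.com/Blog/post")
lower_path_allowed = parser.can_fetch("GPTBot", "https://example.com/blog/post")

# User-agent names are matched case-insensitively.
lower_agent_allowed = parser.can_fetch("gptbot", "https://example.com/Blog/post")

print("GPTBot on /Blog/post:", capital_path_allowed)
print("GPTBot on /blog/post:", lower_path_allowed)
print("gptbot on /Blog/post:", lower_agent_allowed)
```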

Mistake 4: Assuming robots.txt blocks indexing

robots.txt blocks crawling, not indexing. If other sites link to your pages, search engines can still index the URLs without crawling the content. If you need to prevent indexing entirely, use a noindex meta tag or X-Robots-Tag HTTP header instead, and leave the page crawlable so the crawler can actually see that directive.
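For the header route, here is a minimal sketch as a WSGI application in Python. The path prefixes are hypothetical, and most sites would set this header in their web server or framework configuration instead of application code.

```python
# Hypothetical sections of the site that should stay out of search indexes.
NOINDEX_PREFIXES = ("/internal/", "/drafts/")


def app(environ, start_response):
    """Tiny WSGI app that adds X-Robots-Tag: noindex on selected paths."""
    path = environ.get("PATH_INFO", "/")
    headers = [("Content-Type", "text/plain; charset=utf-8")]

    if path.startswith(NOINDEX_PREFIXES):
        # The page stays crawlable; the header tells engines not to index it.
        headers.append(("X-Robots-Tag", "noindex"))

    start_response("200 OK", headers)
    return [b"ok\n"]
```

To try it locally, `wsgiref.simple_server.make_server("", 8000, app).serve_forever()` from the standard library will serve it.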

Mistake 5: Not updating rules as new AI bots appear

AI companies launch new crawlers regularly. A robots.txt written in 2024 that blocks GPTBot and ClaudeBot misses meta-externalagent, Bytespider, Amazonbot, Applebot-Extended, and dozens of others. Review your robots.txt quarterly. The ai-robots-txt GitHub repository is a good reference for staying current.
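A quarterly review can be partly automated. The sketch below scans a robots.txt file for the user-agents it names and reports which known AI crawlers are missing. The function names are ours, and the bot list is a short sample from this article; swap in a fuller list from a source you trust.

```python
# Sample of AI crawler names from this article; not an exhaustive list.
KNOWN_AI_BOTS = [
    "GPTBot",
    "ClaudeBot",
    "meta-externalagent",
    "Bytespider",
    "Amazonbot",
    "Applebot-Extended",
]


def mentioned_agents(robots_txt: str) -> set[str]:
    """Return the lowercased user-agent names that appear in the file."""
    agents = set()
    for raw_line in robots_txt.splitlines():
        line = raw_line.split("#", 1)[0].strip()  # drop comments
        field, sep, value = line.partition(":")
        if sep and field.strip().lower() == "user-agent":
            agents.add(value.strip().lower())
    return agents


def missing_bots(robots_txt: str, known: list[str] = KNOWN_AI_BOTS) -> list[str]:
    """List known AI bots that the file has no group for."""
    seen = mentioned_agents(robots_txt)
    return [bot for bot in known if bot.lower() not in seen]


# A 2024-style file that only knows about two bots.
OLD_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /
"""

print(missing_bots(OLD_ROBOTS_TXT))
```

A missing bot isn't automatically a problem, since you may want to leave some crawlers on the default rules, but the report shows where a decision hasn't been made yet.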

robots.txt limitations: when to use firewall rules instead

Here's something most guides won't tell you plainly: robots.txt is voluntary. It's a set of suggestions, not a technical barrier. Any crawler can read your robots.txt and choose to ignore it completely.

According to a UC San Diego research paper, 77% of participants surveyed had never even heard of robots.txt. And among AI companies, compliance with robots.txt varies. Multiple reports have documented AI crawlers accessing content that robots.txt explicitly blocks.

This means robots.txt should be your first line of defense but not your only one. Here's how the options compare:

| Method | Blocks Compliant Bots | Blocks Non-Compliant Bots | Implementation Difficulty | Risk |

|---|---|---|---|---|

| robots.txt | Yes | No | Low (text file) | None |

| Cloudflare WAF rules | Yes | Yes | Medium | May block legitimate users if misconfigured |

| Rate limiting | Partial | Yes | Medium | May affect site performance |

| User-agent blocking (server-side) | Yes | Yes (if detected) | Medium-High | Spoofed user agents bypass it |

| Bot management solutions | Yes | Yes | High (cost + setup) | Most effective but expensive |

When robots.txt is enough

- You're blocking well-known, compliant bots (GPTBot, ClaudeBot, Googlebot)

- Your goal is to communicate policy, not enforce it technically

- You want to control search engine AI training without losing search indexing

When you need firewall rules

- You've identified bots ignoring your robots.txt

- Your server costs are spiking from aggressive crawling

- You need to protect high-value content (pricing pages, proprietary data)

- You're dealing with bots that disguise their user-agent strings

Cloudflare Turnstile and similar tools add a verification layer that bots can't bypass by simply ignoring robots.txt. For most mid-market sites, the combination of robots.txt for policy signaling and a WAF for enforcement gives you the best coverage.
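For the server-side user-agent blocking row in the comparison above, here is a minimal sketch written as WSGI middleware in Python. The token list and `hello_app` are illustrative assumptions. As the table notes, this only stops bots that identify themselves honestly, because a spoofed user-agent string passes straight through.

```python
# Lowercase substrings to look for in the User-Agent header (illustrative list).
BLOCKED_UA_TOKENS = ("gptbot", "claudebot", "meta-externalagent", "bytespider", "ccbot")


def block_ai_crawlers(app):
    """Wrap a WSGI app and return 403 to requests from listed crawlers."""

    def middleware(environ, start_response):
        user_agent = environ.get("HTTP_USER_AGENT", "").lower()
        if any(token in user_agent for token in BLOCKED_UA_TOKENS):
            start_response("403 Forbidden", [("Content-Type", "text/plain; charset=utf-8")])
            return [b"Forbidden\n"]
        return app(environ, start_response)

    return middleware


def hello_app(environ, start_response):
    """Placeholder application standing in for a real site."""
    start_response("200 OK", [("Content-Type", "text/plain; charset=utf-8")])
    return [b"hello\n"]


application = block_ai_crawlers(hello_app)
```

Unlike robots.txt, this enforces the policy instead of stating it, so double-check the token list before deploying: blocking a search bot here removes its referrals as well.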

llms.txt vs robots.txt: a new standard for AI-specific instructions

While robots.txt was designed for search engine crawlers in the 1990s, a new file called llms.txt has emerged specifically for communicating with large language models. It's worth understanding the difference and when you might use both.

robots.txt uses the Robots Exclusion Protocol to tell crawlers which URLs they can access. It was built for search engines that crawl, index, and link back to your content. The format is technical: User-agent strings, Allow/Disallow directives, Sitemap references.

llms.txt is a proposed convention for providing AI-readable information about your site. Instead of restricting access, it describes your content in a structured way that LLMs can use. Think of it as a site map written for AI understanding rather than search engine indexing.

| Feature | robots.txt | llms.txt |

|---|---|---|

| Purpose | Control crawl access | Describe site content for AI |

| Format | User-agent + Disallow/Allow | Markdown-like, human readable |

| Enforceability | Voluntary (de facto standard since 1994, formalized as RFC 9309 in 2022) | Voluntary (emerging, no standards body) |

| AI training control | Yes (via specific user agents) | No (descriptive, not restrictive) |

| Search impact | Direct (blocks crawling) | None (informational only) |

| Adoption | Widespread | Early stage |

Here's the practical takeaway: robots.txt and llms.txt serve different functions. robots.txt controls access. llms.txt describes content. If your priority is blocking AI training crawlers, robots.txt is still the tool. If you want to help AI systems understand your site structure and cite you accurately, llms.txt adds value on top.

We're monitoring llms.txt adoption across our 50M+ domain dataset and will publish adoption numbers once the sample is statistically meaningful. For now, focus your energy on getting robots.txt right since it has direct, measurable impact today.

A data-driven framework for robots.txt decisions

Based on everything we've analyzed, here's a decision framework for every major AI crawler:

| Crawler | Recommendation | Rationale |

|---|---|---|

| GPTBot (OpenAI) | Block unless you want ChatGPT training visibility | 1,255:1 ratio. Block GPTBot, allow OAI-SearchBot separately. |

| ClaudeBot (Anthropic) | Block | 20,583:1 ratio. Near-zero referral ROI. |

| Meta-ExternalAgent (Meta) | Block | Zero referrals. Pure training extraction. |

| Bytespider (ByteDance) | Block | Low referral return. Training-focused. |

| CCBot (Common Crawl) | Block unless you value open datasets | Used by many AI companies indirectly. |

| Google-Extended | Block | Blocks Google's AI training while keeping search indexing. Best of both worlds. |

| OAI-SearchBot (OpenAI) | Allow | Handles ChatGPT search — sends referral traffic. |

| ChatGPT-User (OpenAI) | Allow | User-action bot — responds to real queries. |

| PerplexityBot (Perplexity) | Allow | 111:1 ratio — best among dedicated AI companies. |

| Bingbot (Microsoft) | Allow | 32:1 ratio. Search indexing + Copilot. Acceptable trade-off. |

| Googlebot | Always allow | 5:1 ratio. Blocking means search invisibility. |

Here's what a data-informed robots.txt looks like in practice:

```
# Block AI training crawlers (high crawl-to-refer ratio)
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: Claude-Web
Disallow: /

User-agent: Meta-ExternalAgent
Disallow: /

User-agent: Bytespider
Disallow: /

User-agent: CCBot
Disallow: /

User-agent: Google-Extended
Disallow: /

User-agent: Amazonbot
Disallow: /

User-agent: Applebot-Extended
Disallow: /

User-agent: cohere-ai
Disallow: /

User-agent: Diffbot
Disallow: /

User-agent: Omgilibot
Disallow: /

User-agent: FacebookBot
Disallow: /

# Allow AI search and user-action bots (send referral traffic)
User-agent: OAI-SearchBot
Allow: /

User-agent: ChatGPT-User
Allow: /

User-agent: PerplexityBot
Allow: /

# Always allow search engines
User-agent: Googlebot
Allow: /

User-agent: bingbot
Allow: /
```

This approach blocks the vast majority of training crawling while preserving the bots that could send actual referral traffic back to your site.

2026 AI crawler benchmarks at a glance

Here are the key benchmarks from our Q1 2026 analysis, consolidated for quick reference:

| Benchmark | Value | Source | Notes |

|---|---|---|---|

| Most blocked AI crawler | GPTBot (5.52% of DISALLOW rules) | Our Cloudflare Radar analysis | Followed by CCBot (5.08%) |

| Worst crawl-to-refer ratio | Anthropic — 20,583:1 | Our Cloudflare Radar analysis | 4,117x worse than Google |

| Best AI-company crawl ratio | Perplexity — 111:1 | Our Cloudflare Radar analysis | Search model returns traffic |

| Training + mixed traffic share | 89.4% of AI crawling | Our Cloudflare Radar analysis | Only 10.2% is search/user action |

| Bot traffic as % of all web | 30.6% | Our Cloudflare Radar analysis | AI crawlers are a growing share |

| AI traffic growth (2025 YoY) | 187% | HUMAN Security report | Agentic AI traffic up 7,851% |

| Top news sites blocking AI training bots | 79% | BuzzStream study | 14% block all AI bots, 18% block none |

| Retail share of AI crawling | 28.1% | Our Cloudflare Radar analysis | More than double any other industry |

| Fastest-growing DISALLOW bot | Amazonbot (+0.87pp in Q1) | Our Cloudflare Radar analysis | ClaudeBot and Meta tied at +0.51pp |

| GPTBot website coverage decline | 84% down to 12% | Search Engine Journal / Hostinger | OAI-SearchBot reached 55.67% |

What this means for technology intelligence

The robots.txt arms race creates a growing problem for any data product that relies on web crawling. As more sites block more bots, crawling-dependent tools develop blind spots.

At TechnologyChecker, we've been dealing with this reality since day one. Our technology detection pipeline uses multi-signal detection specifically because we knew crawling access would tighten:

- DNS record analysis — Certificate Authority data reveals cloud providers (we tracked 10.9 billion certificates in Q1 2026), CNAME records reveal CDN and hosting infrastructure, MX records reveal email providers

- HTTP header fingerprinting — Server headers, X-Powered-By headers, and security headers reveal backend technologies without needing to render page content

- JavaScript signature detection — Library fingerprints in public assets identify frontend frameworks

- Historical baseline data — 20 years of technology adoption patterns let us detect changes even when current access is restricted
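To make the header-fingerprinting signal in the list above concrete, here is a minimal sketch. It is not our production pipeline: the rule table `HEADER_RULES` and the function `fingerprint_headers` are hypothetical, and the substrings cover only a handful of commonly seen header values.

```python
from typing import Mapping

# Hypothetical rule table: (header name, substring to look for, technology label).
HEADER_RULES = [
    ("server", "nginx", "nginx"),
    ("server", "apache", "Apache HTTP Server"),
    ("server", "cloudflare", "Cloudflare"),
    ("x-powered-by", "php", "PHP"),
    ("x-powered-by", "express", "Express"),
    ("x-powered-by", "asp.net", "ASP.NET"),
]


def fingerprint_headers(headers: Mapping[str, str]) -> list[str]:
    """Return technology labels whose rule matches the given response headers."""
    # Header names are case-insensitive, so normalise names and values first.
    normalised = {name.lower(): value.lower() for name, value in headers.items()}
    found: list[str] = []
    for header, needle, label in HEADER_RULES:
        if needle in normalised.get(header, "") and label not in found:
            found.append(label)
    return found


if __name__ == "__main__":
    sample = {"Server": "nginx/1.24.0", "X-Powered-By": "PHP/8.2.1"}
    print(fingerprint_headers(sample))
```

Because the function only reads headers that a server returns on any response, this kind of signal does not depend on rendering page content. Many sites strip or rewrite these headers, which is one reason to combine it with the other signals.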

The robots.txt data we've analyzed in this report quantifies what we've observed operationally: the open web is closing. We expect the share of sites that block GPTBot, currently 13.8%, to roughly double within a year. Crawl-to-refer ratios will likely be the metric that determines whether a bot gets access or gets blocked.

Technology intelligence tools that depend on a single data collection method, whether that's crawling, browser extensions, or job posting analysis, will face increasing gaps. The sites that are blocking most aggressively (Technology at 910 DISALLOW rules, Business at 798) are exactly the sites that B2B sales teams need intelligence on. That makes multi-signal detection not just a nice-to-have but a requirement for accurate technographic data.

Frequently Asked Questions

Is there a robots.txt for AI?

Yes. The standard robots.txt file is the primary mechanism for controlling AI crawler access. You specify AI-specific user-agent strings (GPTBot, ClaudeBot, Meta-ExternalAgent, etc.) with Disallow directives. There's also an emerging llms.txt standard designed specifically for communicating with large language models, but it's descriptive rather than restrictive. For blocking AI training crawlers, robots.txt remains the established and widely supported approach.

Is robots.txt a legal thing?

robots.txt itself isn't a law. It's a technical protocol, a set of instructions that well-behaved crawlers follow voluntarily. However, courts have considered robots.txt as evidence of a website's expressed wishes regarding access. In several legal disputes involving web scraping, including cases against AI companies, robots.txt policies have been cited as proof that content was accessed against the site owner's stated terms. It's a policy signal, not a legal contract, but it carries weight in court.

Is robots.txt a vulnerability?

Not a security vulnerability in the traditional sense, but it can expose information. robots.txt files are publicly readable, and Disallow paths can reveal the existence of directories or files you might not want exposed (like /admin/, /staging/, or /internal-api/). Never rely on robots.txt as a security measure. Use proper authentication, access controls, and server-side rules to protect sensitive content. robots.txt is for managing crawler behavior, not securing your site.
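One way to check your own file for this kind of exposure is to scan its Disallow paths for names that hint at non-public areas. The sketch below is a simple heuristic, not a security scanner; the hint list `SENSITIVE_HINTS` and the function `sensitive_disallows` are hypothetical and should be adapted to your own naming conventions.

```python
# Hypothetical path fragments that often indicate non-public areas.
SENSITIVE_HINTS = ("admin", "staging", "internal", "backup", "private")


def sensitive_disallows(robots_txt: str) -> list[str]:
    """Return Disallow paths that contain a sensitive-looking fragment."""
    flagged = []
    for raw_line in robots_txt.splitlines():
        line = raw_line.split("#", 1)[0].strip()  # drop trailing comments
        field, _, value = line.partition(":")
        if field.strip().lower() != "disallow":
            continue
        path = value.strip()
        if any(hint in path.lower() for hint in SENSITIVE_HINTS):
            flagged.append(path)
    return flagged


if __name__ == "__main__":
    example = "User-agent: *\nDisallow: /admin/\nDisallow: /staging/\nDisallow: /search\n"
    print(sensitive_disallows(example))
```

Any path this flags is worth protecting with authentication or server-side rules, since listing it in robots.txt only advertises that it exists.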

How do you block AI crawlers in robots.txt in 2026?

Add specific User-agent and Disallow directives for each AI crawler you want to block. In 2026, you need to target at least 10-15 user-agent strings to cover the major AI crawlers. We recommend using the data-driven framework in this article: block training bots (GPTBot, ClaudeBot, Meta-ExternalAgent, CCBot, Bytespider, Google-Extended, Amazonbot) while allowing search bots that return traffic (OAI-SearchBot, ChatGPT-User, PerplexityBot). The full robots.txt code example earlier in this post is ready to copy and deploy.
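Before deploying a policy like this, it can help to check it locally. The sketch below renders one group per bot from the two lists named above and tests the result with Python's standard-library `urllib.robotparser`. The helper names `build_policy` and `can_fetch` and the `example.com` URL are ours, and the standard-library parser may not match every crawler's own interpretation of the rules.

```python
from urllib.robotparser import RobotFileParser

# Bot names taken from the recommendation above.
TRAINING_BOTS = ["GPTBot", "ClaudeBot", "Meta-ExternalAgent", "CCBot",
                 "Bytespider", "Google-Extended", "Amazonbot"]
SEARCH_BOTS = ["OAI-SearchBot", "ChatGPT-User", "PerplexityBot"]


def build_policy(blocked: list[str], allowed: list[str]) -> str:
    """Render one robots.txt group per bot: block everything or allow everything."""
    groups = [f"User-agent: {bot}\nDisallow: /" for bot in blocked]
    groups += [f"User-agent: {bot}\nAllow: /" for bot in allowed]
    return "\n\n".join(groups) + "\n"


def can_fetch(policy: str, bot: str, url: str = "https://example.com/page") -> bool:
    """Check whether `bot` may fetch `url` under `policy`, per the stdlib parser."""
    parser = RobotFileParser()
    parser.parse(policy.splitlines())
    return parser.can_fetch(bot, url)


if __name__ == "__main__":
    policy = build_policy(TRAINING_BOTS, SEARCH_BOTS)
    print(policy)
    for bot in TRAINING_BOTS + SEARCH_BOTS:
        print(f"{bot}: {'allowed' if can_fetch(policy, bot) else 'blocked'}")
```

A check like this confirms only that the file says what you intend. Compliance remains voluntary on the crawler's side.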

Should I allow or block GPTBot and ClaudeBot?

The data suggests blocking both for most websites. GPTBot has a 1,255:1 crawl-to-refer ratio, meaning it crawls 1,255 pages for every referral it sends back. ClaudeBot is far worse at 20,583:1. However, if you block GPTBot, make sure you separately allow OAI-SearchBot, which handles ChatGPT search and actually sends referral traffic (it appears in 4.22% of ALLOW rules in our data). There's no similar search-specific bot from Anthropic, so blocking ClaudeBot has no downside for referral traffic. The one exception: if you specifically want your content reflected in ChatGPT or Claude responses, allowing training access is the trade-off.

Our Methodology

This analysis uses data from multiple Cloudflare Radar API endpoints, which aggregate traffic patterns across Cloudflare's global network spanning 330 cities in 125+ countries. Cloudflare processes over 81 million HTTP requests per second and 67 million DNS queries per second, providing one of the most extensive views of global internet activity available.

Data period: January 1 through March 31, 2026 (Q1 2026)

Geographic scope: Global

Endpoints used:

- robots.txt analysis (`get_robots_txt_data`): DISALLOW and ALLOW directive distributions by user agent, domain category breakdowns, weekly timeseries trends. Sample: 4,047 robots.txt files parsed on March 30, 2026.

- Crawl-to-refer ratios (`get_bots_crawlers_data`): Ratio of crawl requests to referral traffic by bot operator, with daily timeseries.

- AI bot traffic (`get_ai_data`): Traffic share by user agent, crawl purpose classification, industry targeting.

- HTTP traffic (`get_http_data`): Human vs bot traffic split.

Limitations: robots.txt percentages represent the share of directives across Cloudflare's monitored domains, not absolute blocking rates across all internet domains. Crawl-to-refer ratios can vary significantly day-to-day, and daily extremes (such as Anthropic's spikes above 100,000:1) may reflect transient crawl bursts rather than sustained behavior. Some AI crawlers may not identify themselves accurately in user agent strings, which means true crawl volumes could be higher. This analysis also doesn't account for sites using server-side blocking or WAF rules that never appear in robots.txt.

We plan to update this analysis quarterly. If you'd like to see how AI crawler blocking affects technology detection accuracy across your target accounts, explore our platform rankings data or check how cloud provider traffic share interacts with bot management at the infrastructure level.

Data source: Cloudflare Radar API (radar.cloudflare.com). Analysis and insights by TechnologyChecker.io.

David Thomson

CTO